Kernel Panic: The Biological Bottleneck

I am running infinite software on legacy hardware.

// SYSTEM: SIGNAL [03]

// DATE: MARCH 1, 2026

// EST: 10 MIN READ

I am withdrawing a bit from the physical world. Not out of isolation, but out of curiosity. The friction between having an idea and deploying a tool has plummeted and is approaching zero very fast. The resulting acceleration is triggering a biological system failure.

By day, I work in banking regulation - a world of risk frameworks, compliance structures and high-stakes precision. It demands 100% of my cognitive “RAM”. When I clock out late, I am left with a finite amount of bandwidth. Standard social convention dictates a retreat to the bar to decompress, watch a movie or do some sports. That’s what I have exclusively done in the past.

But the capabilities of AI keep compounding on an exponential curve, with mesmerizing speed and unfathomable implications. And I am curious. My mind is live-wired, buzzing all the time with new ideas that I want to explore.

And for the first time in history, the machine is the perfect intellectual sparring partner: available, knowledgeable and tireless. But this “limitless” access to knowledge has revealed a critical structural flaw. The bottleneck isn’t the GPU. The bottleneck is me.

Here are my observations on what happens when you try to run light-speed software on a biological motherboard.

> root/signal/builders-high

1. The Builder’s High (The Post-Christmas Leap)

I have always loved the idea of coding, but syntax was a friction. I knew some R, SQL, Python and C#, but I was really more of a slow coder - very intermediate at best. Now, that friction is gone and I have ventured into a state of “toyful exploration” since mid-2025.

However, it is important to emphasize that a fundamental step-change occurred late last year. Around Christmas 2025, AI systems went from being helpful autocomplete tools to autonomous orchestrators. I had been an avid user before, but only recently became fully immersed in the latest AI tools, as the models demonstrated a sudden leap in long-term coherence and tenacity, powering through large tasks in a way that completely disrupted the default programming workflow.

In the last few months alone, I haven’t just “read” about tech. I have built my own infrastructure:

The Portfolio Architect: I spun up my own portfolio management toolkit - automating diversification analysis, return attribution and factor loadings.

The Bio-Hacker: I got annoyed with Garmin’s subscription policy. So, I built a whole suite of analytic metrics replicating their paid tier and created a webcam analyzer that tracks my facial features during meetings to measure my heart rate via photoplethysmography (PPG) and estimates my stress load in real time.

The Algo-Trader: I am currently prototyping algorithmic trading systems to audit my ideas about various trading strategies, and I am learning a lot more about statistics now than I did in college.

I am essentially codifying my own existence. The “delight” is visceral. But the speed at which I am building has triggered a massive compute mismatch.
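For readers curious what the pulse-detection step in a webcam heart-rate tool might look like, here is a minimal sketch. It is not the code from my app. It assumes the video frames have already been reduced to one brightness value per frame (for example, an average over a face region), and it uses a plain discrete Fourier transform over the plausible heart-rate band instead of an optimized FFT library. The function name `estimate_bpm`, the band limits and the synthetic test signal are all illustrative assumptions.

```python
import cmath
import math


def estimate_bpm(samples, fps, low_hz=0.7, high_hz=3.0):
    """Return the dominant frequency inside the heart-rate band, in beats per minute.

    samples: one brightness value per video frame (hypothetical input)
    fps: frames per second of the recording
    low_hz / high_hz: assumed plausible pulse range (42 to 180 bpm)
    """
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the constant offset

    best_freq, best_power = 0.0, -1.0
    for k in range(1, n // 2):
        freq = k * fps / n  # frequency represented by DFT bin k
        if not (low_hz <= freq <= high_hz):
            continue
        # Naive DFT coefficient for bin k (fine for a few hundred samples).
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(centered)
        )
        power = abs(coeff) ** 2
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq * 60.0


# Synthetic 10-second recording at 30 fps: a weak 1.2 Hz pulse (72 bpm)
# sitting on top of a stronger slow drift and a constant brightness level.
fps = 30
signal = [
    0.5 * math.sin(2 * math.pi * 1.2 * t / fps)    # the pulse
    + 2.0 * math.sin(2 * math.pi * 0.1 * t / fps)  # slow lighting drift
    + 100.0                                        # baseline brightness
    for t in range(fps * 10)
]

print(round(estimate_bpm(signal, fps)))  # 72
```

Restricting the search to the heart-rate band is what lets the weak pulse win over the much stronger drift. Real footage would also need noise handling and motion compensation, which this sketch leaves out.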

> root/signal/semantic-focus

2. From Syntax to Semantics

Nvidia CEO Jensen Huang made headlines in February 2024 with a stark prediction for the industry:

“It is our job to create computing technology such that nobody has to program … The programming language is human.”1

He is right. But he doesn’t mean building is over. The opposite is likely true: we are just getting started. I mostly don’t “write” code myself anymore. I direct it. My role has shifted from mason (laying bricks of syntax) to architect (designing the cathedral). And I am not alone.

Boris Cherny, the inventor of Claude Code, recently admitted he hasn’t written or edited a single line of code in months.2 Andrej Karpathy also echoed this sentiment, stating:

“You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You’re spinning up AI agents, giving them tasks in English and managing and reviewing their work in parallel.”3

That mirrors my own experience. I am now programming in English. I prompt multimodally, mixing text and voice, English and German, screenshots of my notes and images. Then I debug in logic.

This is what Peter Steinberger refers to as “Agentic Engineering”. You are still in the driver’s seat. The agent doesn’t build random things; it builds your vision.4

The competitive advantage in this new labor market isn’t memorizing syntax. It is workflow architecture.

How do you set up the global rules?

How do you codify repetitive workflows?

How do you ensure perfect pre-task conditions for extended agentic work sessions?

How do you verify the output?

> root/signal/code-explosion

3. The Cambrian Explosion

Again, this isn’t just me. We are standing at the precipice of a massive inflation in code supply. Cloudflare made another staggering prediction back in March 2025:

“More code will be written in the next 5 years than has been cumulatively written in all of programming history.”5

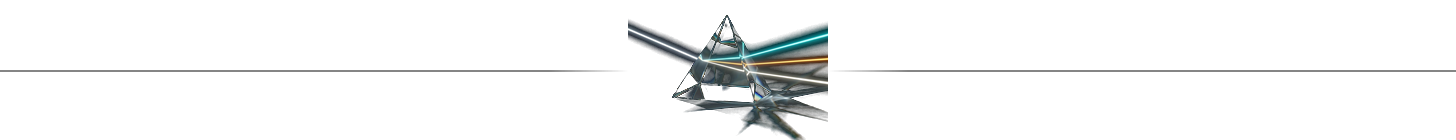

This is already playing out in real time, with GitHub reporting massive spikes in commits and new repositories directly tied to AI-assisted development. Among other AI coding tools, Claude Code is rapidly gaining momentum - a mind-bending fact given that the tool just celebrated its 1-year anniversary last week.6

[!WARNING] The Jevons Paradox of Code

As the cost of software development plummets to near zero, the demand for software doesn’t plateau - it explodes. Latent demand is unlocked.

Millions of “non-coders” are becoming builders. We are going to see a flood of “personal software” - tools built by one person, for one person, to solve one specific problem. My “Garmin killer” app doesn’t need to scale to 10 million users. It just needs to work for me.

Every computer is rapidly becoming a prompt terminal. Everything is (and will be) code. We have reached the era where code is the world.

> root/signal/cache-miss

4. The L1 Cache Miss (The Biological Cost)

But this infinite creative power comes with a biological tax. I call it the L1 cache miss.

According to cognitive psychology (specifically the work of Nelson Cowan), the human “working memory capacity” - our active focus of attention - is limited to roughly 4 highly abstracted concepts (chunks) at any given time. (Chunks in this context do not map onto tokens. They are much harder to quantify: each one represents a fact or a complex context that the human brain compresses through its amazing abstraction capabilities and holds in “working memory”.)

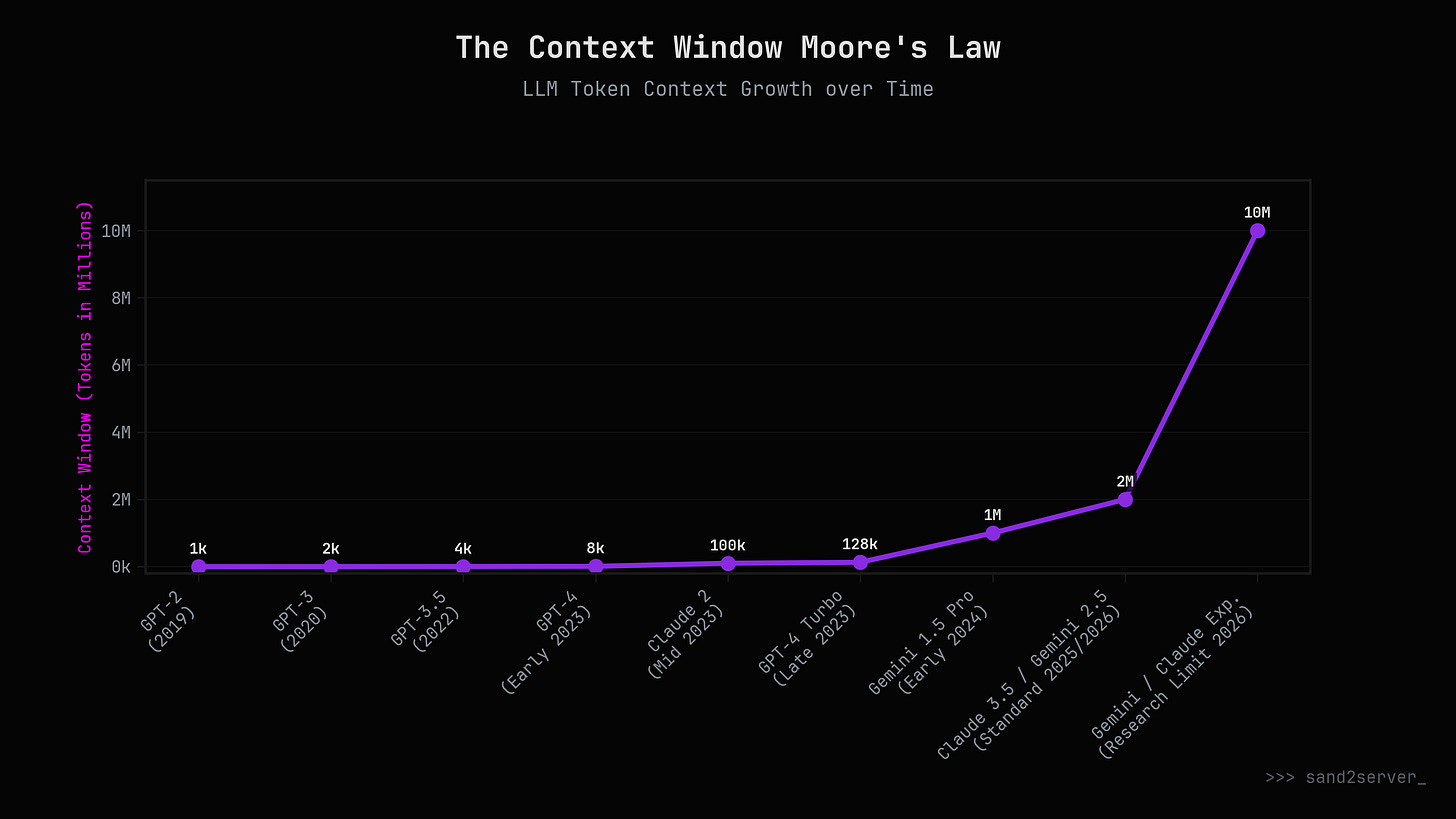

By contrast, Gemini 3.1 Pro and Claude 4.6 Opus do not need a biological swap file. They can hold millions of raw, un-abstracted tokens (representing thousands of distinct concepts, entire codebases and global rules) in active, high-speed RAM simultaneously, never dropping context.

We are interfacing an infinite-context swarm intelligence with a 4-slot biological handler.

I will be deep in a “flow state”, feeling empowered, almost limitless, orchestrating three agents to build different micro-services … and then, snap.

> Sys_Interrupt: Working Memory Exceeded

> ERROR 404: Concept Not Found

I forget a variable I just defined.

I stare at a decision tree and physically cannot process the logic.

I try to decide what to eat for dinner and my brain initiates a kernel panic.

My biological “swap file” is full. To process complex logic, I must constantly “thrash”, swapping files back and forth between my slow biological hard drive (long-term memory) and my tiny 4-slot cache. The “flow” of AI is seductive because it feels like speed. We tend to read that feeling of speed as efficiency, but the process may often be the opposite: jumping between tasks and repeatedly re-shifting focus is highly inefficient. Often, all we might be doing in these flow states is witnessing a constant buffer overflow.
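To make the thrashing metaphor concrete, here is a toy simulation. It is a sketch of how a small least-recently-used (LRU) cache behaves, not a model of the brain: the 4-slot capacity mirrors the working-memory figure above, and the names `count_misses` and `round_robin` are made up for this illustration.

```python
from collections import OrderedDict


def count_misses(accesses, capacity=4):
    """Count how often a requested item is NOT in a small LRU cache."""
    cache = OrderedDict()
    misses = 0
    for item in accesses:
        if item in cache:
            cache.move_to_end(item)  # mark as most recently used
        else:
            misses += 1              # must be fetched from "long-term memory"
            cache[item] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict the least recently used item
    return misses


def round_robin(n_concepts, rounds):
    """Visit n_concepts in a fixed order, repeated for a number of rounds."""
    return [f"concept-{i}" for _ in range(rounds) for i in range(n_concepts)]


fits = count_misses(round_robin(4, 5))       # 4 concepts, 20 accesses
overflows = count_misses(round_robin(5, 5))  # 5 concepts, 25 accesses
print(fits, overflows)  # 4 25
```

With four concepts in rotation, only the first visit to each one is a miss. Add a single fifth concept and every one of the 25 accesses becomes a miss, because each newcomer evicts exactly the item that is needed next. One extra thread of thought is enough to turn a smooth loop into constant swapping.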

> root/signal/dopamine-slot-machine

5. The Knowledge Junkie’s Dopamine Loop

In university, “flow” was a rare, coveted state of deep work. It was hard to achieve. With LLMs, flow is the default setting. It is too easy.

Timelines are collapsing as you can go from idea to MVP or PoC in no time. Instead of mindlessly conceptualizing an idea, you just go and build your idea. Hooked by the capabilities of the models and the promise of efficiency gains, the truth might be that you are just getting “busier” than ever before.

But this isn’t the mindless scroll of a social media feed. It is a highly potent dopamine loop fueled by a voracious thirst for knowledge. I start a thread on semiconductor packaging and one curiosity leads to another. The AI hallucinates a connection, I verify it, I dive deeper. Another supply chain bottleneck uncovered. Checking on the task status of my coding agent. Suddenly, three hours have vanished. My sense of time dissolves. With it goes the opportunity to calm your mind. Unwind. Clear the cache. The byproducts of this biological process are physically building up in your body.

Why is this so addictive? Why is it so rewarding? Why does it feel like supercharged learning? It is because the LLM acts as the ultimate private tutor. You iteratively interrogate the machine. The more you build, the more you understand how to work with it. The machine uses the documentation and your past projects to learn the rules, correcting mistakes in a tight, hyper-accelerated feedback loop.

It feels like being “limitless”, but it leaves me physically exhausted, staring at a screen, wondering where the evening went at 3 AM. The drive of the “curious generalist” pushes me forward, exploring paradigms my peers aren’t even aware exist yet. Before AI, my working style was deliberate and methodical. Now, I have become radically pragmatic. I move fast and break things.

But while I am personally drowning in this self-inflicted wave of accelerated development, I look around and realize the water hasn't even reached the ankles of the broader public.

6. The Global Adoption Gap

There is a profound disconnect between those experiencing this acceleration and the rest of the world. With roughly 5.3 billion internet users worldwide, an active AI user base of ~1 billion means that only about 15% to 20% of the connected world is regularly using an LLM.7
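As a quick sanity check on that range, the arithmetic can be written out. The two inputs are the rounded figures quoted above, so the result is a rough estimate and nothing more.

```python
internet_users = 5.3e9  # rounded figure quoted above
ai_users = 1.0e9        # rounded figure quoted above

share = ai_users / internet_users
print(f"{share:.1%}")  # 18.9%
```

The point estimate sits near the top of the 15% to 20% band, which leaves room for uncertainty in how "active" and "regular" use are counted.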

[!NOTE] The Consumer Monetization Wall

Getting a general consumer to pay ~$20/month for a chatbot is incredibly difficult. The general TAM is massive, but their willingness to pay remains a bottleneck because their mental models are constrained by evaluating the tool as a “glorified search bar”.

While the general consumer hesitates, the builders are staying up all night, overwhelmed by the pace of progress. The capabilities are broadly not understood because people simply haven’t used them to build personal infrastructure yet.

7. The Architect’s Burden

This acceleration creates a new form of Imposter Syndrome. I look at my “heart rate tracker” app, and I think: Is this mine? I didn’t write the fast Fourier transform logic to detect the pulse. The agent did.

But then I remember the architect. Does the architect feel like an imposter because they didn’t pour the concrete? No. Their value was in the vision, the specification and the verification.

The terms “vibe coding” and “prompting” do this paradigm a heavy disservice. They imply you can just type a wish and get an app. But at the top tiers (commercial-grade software engineering), deep technical expertise is even more of a multiplier than before. A “vibe coder” can build a toy. An “agentic engineer” can arrange a system, putting agents into longer loops and removing themselves as the bottleneck entirely. Coding is only a small part of a professional software engineer’s job.

It’s not magic; it’s delegation. It requires “high-level direction, taste, oversight, and iteration”.8

I am not outsourcing my thinking; I am supercharging my synthesis. You can use AI as a crutch to degrade your intellect, or you can leverage it as an API into all of humanity’s knowledge. I choose the latter. I consciously decided to tap into the power that the technology is offering me.

To calm my thrashing mind, I must sometimes retreat to offline “legacy” tools: reading printed media without distraction or synthesizing massive inputs into these Substack articles.

A tsunami wave is coming. Of code. Of structural change. Of real world societal and economic implications. We can make forecasts now, but there is no knowing ex ante. Especially not with the pace that this technology is advancing.

The only certainty for now is that the bottleneck remains my biology. But for the first time in history, the constraint is my imagination and my stamina - not my inability to speak the language of the machine.

Are you thrashing? If you feel the swap file filling up, you’re reading Sand2Server. I’m figuring out how to survive the interface between human and machine.

// S2S

> session_terminated

> root/system_info/meta

SCOPE NOTE:

I am fully immersed in the process of “building”, for lack of a better word. And I don’t want to “just” use the technology to build; I want to audit the technology at the same time. How does it work? Why does it even work? And why have we just seen a step change in the capabilities of the latest models? How can they work so well? - It’s what a full stack analyst would do. There are many urgent questions that I am trying to explore for myself and will revisit in a future article.

ABOUT: THE FABRIC

You are navigating the fabric. This stream maps the invisible mesh of network effects, biological incentives, and societal architecture. It examines the human layer where technology actually takes root - or dies trying.

Status: Active.

Focus: The sociology of adoption.

Disclaimer: Not financial advice. Do your own research and due diligence. Please refer to this page.

References

1. NVIDIA CEO: Every Country Needs Sovereign AI by Brian Caulfield, 2024-02-12 (NVIDIA Blog): https://blogs.nvidia.com/blog/world-governments-summit/ [Accessed at: 2026-03-01]

2. Head of Claude Code: What happens after coding is solved by Boris Cherny, 2026-02-26 (Lenny's Podcast - YouTube) [Accessed at: 2026-03-01]

3. Andrej Karpathy on the end of coding by Andrej Karpathy, 2025-02-18 (X.com): https://x.com/karpathy/status/2026731645169185220 [Accessed at: 2026-03-01]

4. The Viral AI Agent that Broke the Internet - Peter Steinberger by Lex Fridman, 2025-10-15 (Lex Fridman Podcast #491) [Accessed at: 2026-03-01]

5. Cloudflare 2025 Investor Day Presentation (p. 66) by Cloudflare, 2025-03-01 (Cloudflare Investor Relations): https://cloudflare.net/files/doc_downloads/Presentations/2025/03/Cloudflare-2025-Investor-Day-Presentation.pdf [Accessed at: 2026-03-01]

6. Claude Code is the Inflection Point by Dylan Patel, 2026-02-28 (SemiAnalysis) [Accessed at: 2026-03-01]

7. Digital 2026 Global Overview Report by Simon Kemp, 2026-01-30 (DataReportal): https://datareportal.com/reports/digital-2026-global-overview-report [Accessed at: 2026-03-01]

8. Andrej Karpathy on the end of coding by Andrej Karpathy, 2025-02-18 (X.com): https://x.com/karpathy/status/2026731645169185220 [Accessed at: 2026-03-01]
